DiffUSE January 2026 Retreat: From Coast to Coast, Diffuse Scattering Reproduces

Why This Retreat Mattered

In late January, the DiffUSE Project team gathered at Astera’s headquarters in Emeryville, California, for our first in-person progress meeting. Since our October online meeting, every team has made substantial progress.

The retreat brought together team members working on data collection, data processing, molecular dynamics simulations, machine learning modeling, infrastructure, and open science to assess progress against our six-month goals and chart the path forward.

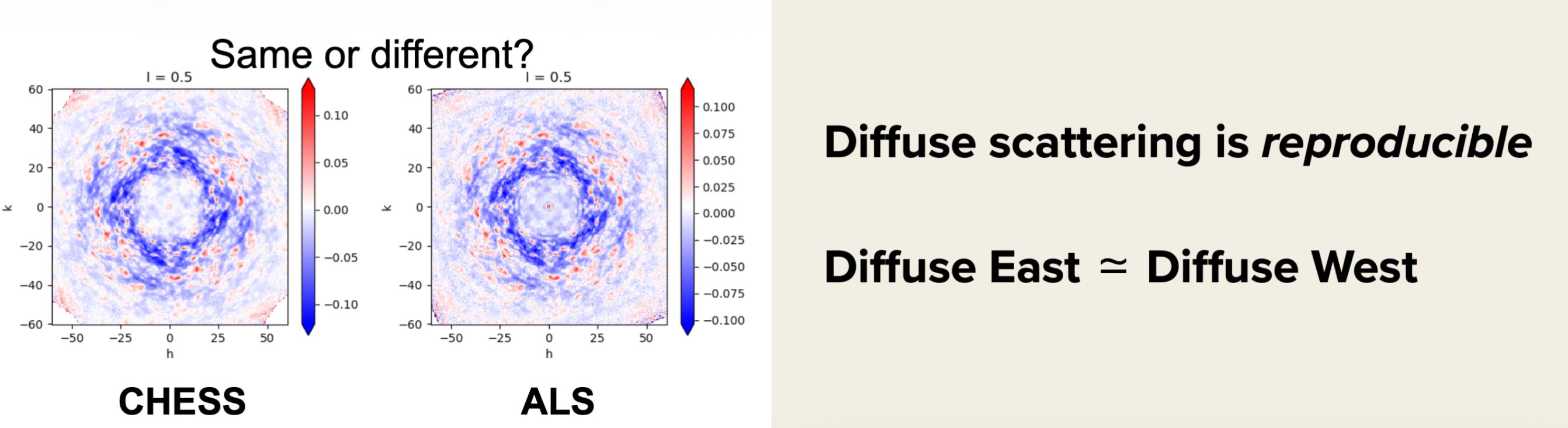

Perhaps the most exciting development is a deceptively simple one: diffuse scattering data collected at CHESS (Cornell) and ALS (Berkeley) are reproducible. This cross-country validation marks a critical step toward making diffuse scattering a routine tool for structural biology.

What Have We Accomplished Since October?

Data Collection

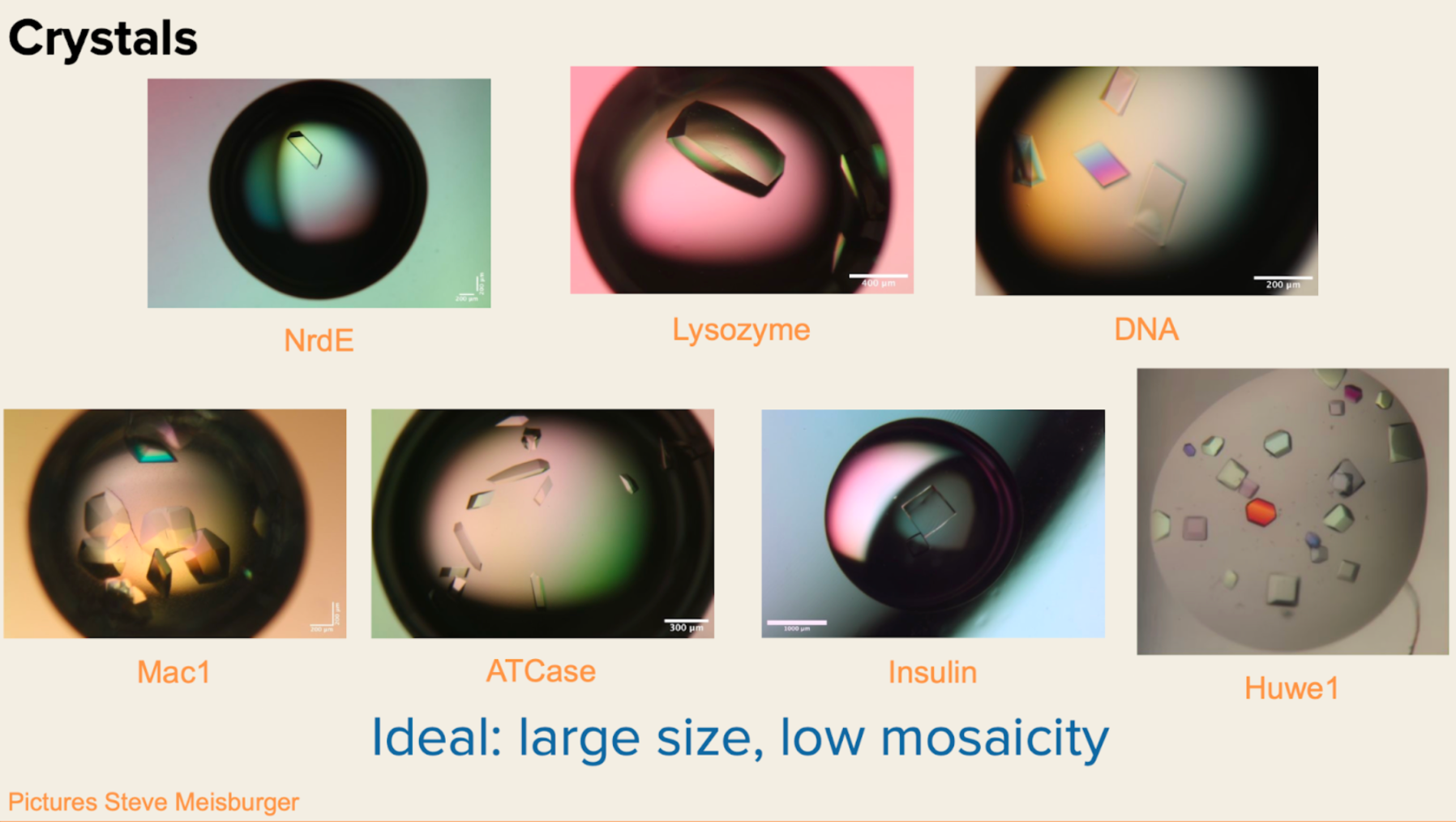

Kara Zielinski (Fraser Lab, UCSF) reported on an intensive fall data collection campaign:

- 9 beamtimes since project inception across two synchrotrons (CHESS and ALS)

- 18 participants contributed to data collection

- 7 protein systems: Mac1, NrdE, Lysozyme, DNA fibers, ATCase, Insulin, and Huwe1

- 129 “good” datasets collected (no data collection errors)

The team systematically explored experimental perturbations:

- Temperature: Data collected at 100K (cryo), 220-275K (intermediate), and 310-315K (elevated), though sample handling for intermediate temperatures requires further optimization

- Ligands: Mac1 + ADPr (11 datasets from CHESS and ALS combined) and Mac1 + small molecule “opener” (6 datasets from ALS)

- Radiation damage mitigation: Vector scans implemented at CHESS to spread dose across radiation-sensitive samples like NrdE and ATCase

Beamline-specific improvements included:

- ALS: Explored dose dependence, wavelength effects, and exposure time optimization; addressed collimator ring scatter issues at 14 keV

- CHESS: Continued X-ray aperture optimization for background reduction

Slide shared at the DiffUSE project’s retreat showcasing crystals of initially tested protein systems.

Data Processing

Steve Meisburger (Cornell/CHESS) presented major advances in data processing tools and a landmark reproducibility result.

mdx2 is an open-source software package for processing and analyzing diffuse X-ray scattering data. Development has accelerated with a new team (Steve Meisburger, Justin Biel, Joseph Lee) and modern development practices, including version control, issue tracking, and code review. Version 10.3 was released in December 2025 with:

- Containerized deployment via `conda install -c conda-forge mdx2`

- Jupyter Lab environment integration

- Live processing capability on Voltage Park during beam times

Reference datasets from CHESS now span multiple systems (Mac1, NrdE, DNA, ATCase, Insulin) with systematic tracking through integration, merging, and fine map generation stages.

The headline result: Diffuse scattering is reproducible between CHESS and ALS. Side-by-side comparisons of Mac1 diffuse maps from both beamlines show consistent features, validating that the signal is robust across different detector systems, beam profiles, and facilities. This East-meets-West reproducibility is foundational for any future multi-site data collection campaigns.
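How can "consistent features" be put on a number? One common approach is to correlate the two maps within resolution shells, because intensity falls off strongly with resolution and a single global correlation can hide disagreement at high resolution. The sketch below assumes both maps are already on a common reciprocal-space grid and flattened to arrays. `shell_cc` and the synthetic data are illustrative only and are not part of mdx2.

```python
import numpy as np


def shell_cc(map_a, map_b, s, n_shells=10):
    """Pearson CC between two diffuse maps, computed per resolution shell.

    map_a, map_b: intensities sampled on the same reciprocal-space grid (1D arrays)
    s: |q| (or 1/d) for each grid point; NaN intensities mark unmeasured points
    """
    ok = np.isfinite(map_a) & np.isfinite(map_b)
    a, b, s = map_a[ok], map_b[ok], s[ok]
    edges = np.linspace(s.min(), s.max(), n_shells + 1)
    # shell index per point; clip so the largest |q| falls in the last shell
    shell = np.clip(np.digitize(s, edges) - 1, 0, n_shells - 1)
    cc = np.full(n_shells, np.nan)
    for k in range(n_shells):
        sel = shell == k
        if sel.sum() > 2:
            cc[k] = np.corrcoef(a[sel], b[sel])[0, 1]
    return edges, cc


# Synthetic demo: one underlying diffuse signal "measured" twice with a
# different overall scale and independent noise (stand-ins for two beamlines).
rng = np.random.default_rng(0)
s = rng.uniform(0.05, 0.3, 20_000)
signal = rng.lognormal(0.0, 0.5, s.size) * np.exp(-5.0 * s)
beamline_1 = signal + rng.normal(0.0, 0.05, s.size)
beamline_2 = 1.3 * signal + rng.normal(0.0, 0.05, s.size)
edges, cc = shell_cc(beamline_1, beamline_2, s)
print(np.round(cc, 2))
```

Pearson CC ignores the overall scale difference between the two measurements, which is convenient when the beamlines differ in flux and detector response.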

Slides shared at the DiffUSE project’s retreat showcasing reproducibility across beamlines.

Analysis of DNA crystals revealed two further findings. First, correlated disorder differs between room temperature and 100K, even when the static structures appear similar. Second, the diffuse signal extends beyond the Bragg resolution limit, suggesting untapped information content.

A Galaxy platform prototype was demonstrated, pointing toward a vision of “Cryosparc for diffuse,” making diffuse data processing accessible through a GUI with integrated workflows and interactive visualizations.

Molecular Dynamics Simulations

Mike Wall presented substantial progress on crystallographic MD simulations.

Apo Mac1 baseline results show strong agreement between simulation and experiment for the total diffuse intensity, with more modest agreement for the anisotropic component:

- Total correlation coefficient: CC = 0.96

- Anisotropic correlation coefficient: CC = 0.56

- Simulation: 2×2×2 supercell with OPC3 waters (279,004 atoms), neutron crystal structure 7TX3, 1100 ns unrestrained trajectory
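Why is the total CC so much higher than the anisotropic CC? A likely contributor is that total intensity is dominated by its smooth falloff with resolution, which simulation and experiment share, whereas the anisotropic CC is computed after each map's shell-averaged (isotropic) part has been removed. The sketch below assumes that definition of the anisotropic component. The function names, the shell count and the synthetic maps are hypothetical and are not the project's analysis code.

```python
import numpy as np


def isotropic_part(intensity, s, n_shells=50):
    """Shell-averaged (isotropic) intensity, mapped back onto every grid point."""
    edges = np.linspace(s.min(), s.max(), n_shells + 1)
    shell = np.clip(np.digitize(s, edges) - 1, 0, n_shells - 1)
    sums = np.bincount(shell, weights=intensity, minlength=n_shells)
    counts = np.bincount(shell, minlength=n_shells)
    return (sums / np.maximum(counts, 1))[shell]


def total_and_anisotropic_cc(sim, exp, s, n_shells=50):
    total = np.corrcoef(sim, exp)[0, 1]
    # anisotropic component = intensity minus its shell average
    aniso = np.corrcoef(sim - isotropic_part(sim, s, n_shells),
                        exp - isotropic_part(exp, s, n_shells))[0, 1]
    return total, aniso


# Synthetic demo: both maps share the same smooth falloff with resolution,
# but their anisotropic features are only partly correlated (r = 0.5).
rng = np.random.default_rng(0)
n = 50_000
s = rng.uniform(0.05, 0.5, n)
falloff = 5.0 * np.exp(-6.0 * s)
shared, own = rng.normal(size=n), rng.normal(size=n)
exp_map = falloff + 0.3 * shared
sim_map = falloff + 0.3 * (0.5 * shared + np.sqrt(0.75) * own)
total, aniso = total_and_anisotropic_cc(sim_map, exp_map, s)
print(f"total CC = {total:.2f}, anisotropic CC = {aniso:.2f}")
```

In this toy case the total CC comes out high even though only half of the anisotropic signal is shared. This is why the anisotropic CC is usually the more demanding test of an MD model.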

MD optimization methods are advancing on two fronts:

- Enrichment: Selectively removing MD frames to increase diffuse correlation

- Reweighting: Using JAX to optimize frame weights via differentiable Pearson CC maximization. Initial test on experimental diffuse data achieved CC = 0.97 with 47,150 reflections to 3.5 Å resolution (work by Karson Chrispens, documented in a DiffUSE blog post)
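Reweighting can be written compactly in JAX. This sketch makes three assumptions. Diffuse intensity is computed from per-frame structure factors as the weighted <|F|^2> - |<F>|^2. Frame weights are parameterized with a softmax so they stay positive and sum to one. The Pearson CC is maximized by plain gradient ascent. Enrichment can be viewed as the special case where some weights are forced to zero. All names and the synthetic data are hypothetical, and this is not the implementation described in the blog post.

```python
import jax
import jax.numpy as jnp


def diffuse_intensity(weights, F):
    """Ensemble diffuse intensity <|F|^2> - |<F>|^2 for each reflection.

    weights: (n_frames,) nonnegative and summing to 1
    F: (n_frames, n_refl) complex structure factors, one row per MD frame
    """
    mean_sq = weights @ (F.real**2 + F.imag**2)  # weighted <|F|^2>
    mean_F = weights @ F                          # weighted <F> (complex)
    return mean_sq - (mean_F.real**2 + mean_F.imag**2)


def pearson_cc(x, y):
    x = x - x.mean()
    y = y - y.mean()
    return jnp.sum(x * y) / jnp.sqrt(jnp.sum(x**2) * jnp.sum(y**2))


def neg_cc(logits, F, I_exp):
    weights = jax.nn.softmax(logits)  # keeps weights positive and normalized
    return -pearson_cc(diffuse_intensity(weights, F), I_exp)


@jax.jit
def step(logits, F, I_exp, lr):
    # gradient descent on -CC, i.e. gradient ascent on the correlation
    return logits - lr * jax.grad(neg_cc)(logits, F, I_exp)


def reweight(F, I_exp, n_steps=500, lr=1.0):
    logits = jnp.zeros(F.shape[0])  # start from uniform frame weights
    for _ in range(n_steps):
        logits = step(logits, F, I_exp, lr)
    return jax.nn.softmax(logits)


# Synthetic demo: random "frames", and a target built from only the first 10.
k_re, k_im = jax.random.split(jax.random.PRNGKey(0))
n_frames, n_refl = 50, 500
F = (jax.random.normal(k_re, (n_frames, n_refl))
     + 1j * jax.random.normal(k_im, (n_frames, n_refl)))
true_w = jnp.zeros(n_frames).at[:10].set(0.1)
I_exp = diffuse_intensity(true_w, F)
uniform = jnp.full(n_frames, 1.0 / n_frames)
cc_before = float(pearson_cc(diffuse_intensity(uniform, F), I_exp))
w = reweight(F, I_exp)
cc_after = float(pearson_cc(diffuse_intensity(w, F), I_exp))
print(f"CC uniform = {cc_before:.2f}, CC reweighted = {cc_after:.2f}")
```

A softmax parameterization avoids constrained optimization. A production version would probably add regularization, such as an entropy penalty, so that a few frames do not overfit the experimental map.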

Ligand perturbations are now being simulated: Mac1 + ADPr shows distinct diffuse patterns compared to baseline Mac1, with protonation state variations (ASP157 → ASH157) under investigation.

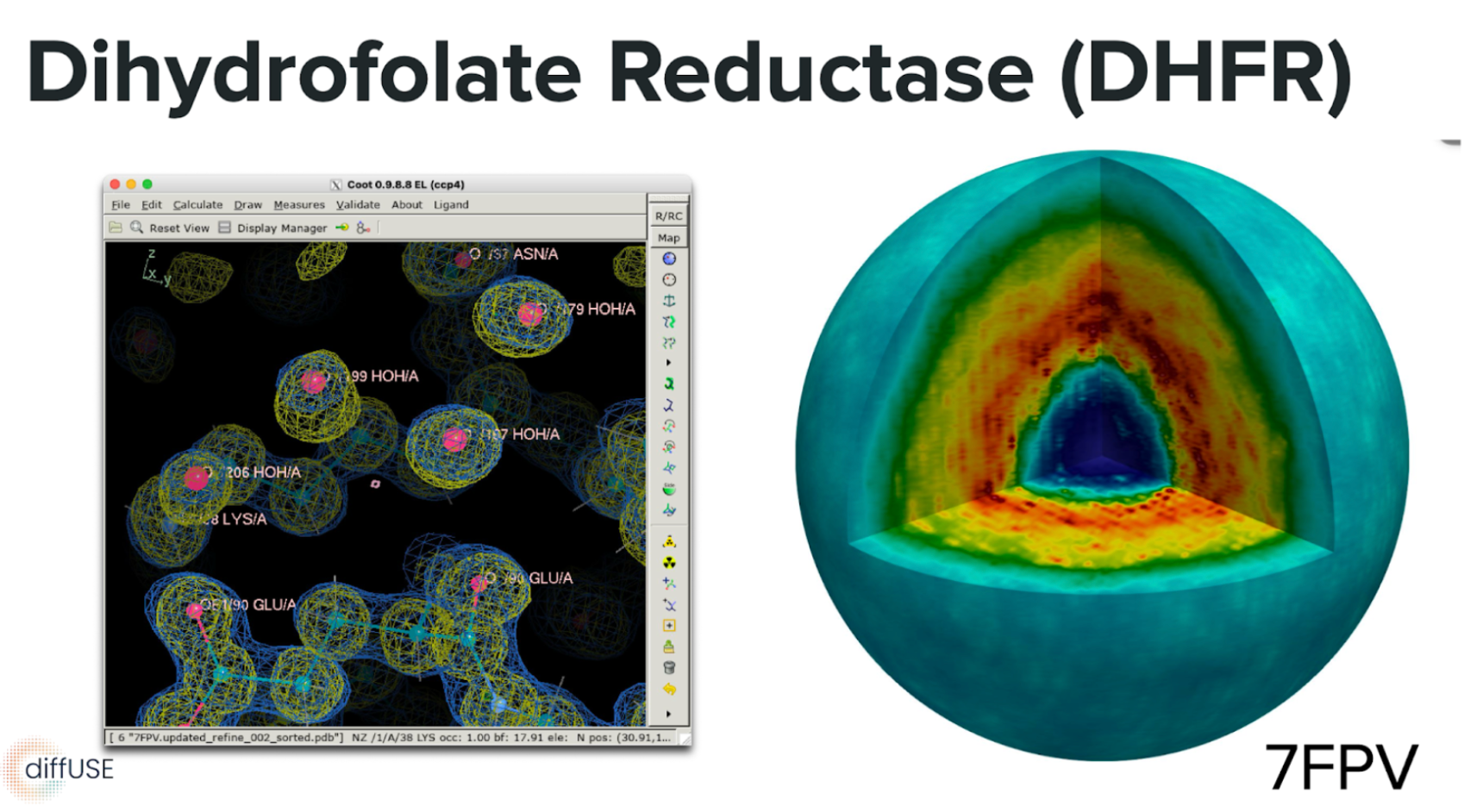

Second system: Dihydrofolate reductase (DHFR, PDB: 7FPV) is being developed as a generalization target, expanding beyond the Mac1 test case.

Simulated diffraction capabilities using nanoBragg (James Holton) enable validation of data processing pipelines—simulated diffuse intensity can be extracted using mdx2, closing the loop between simulation and experiment.

Slides shared at the DiffUSE project’s retreat showcasing simulation results of a new protein system, Dihydrofolate reductase (DHFR, PDB: 7FPV).

Machine Learning Modeling

Marcus Collins presented the ML modeling roadmap focused on using experimental data to reveal hidden protein conformations.

Key insight: Current ML structure predictors (AlphaFold3-like models, including Boltz-2, Protenix, RF3) do not reliably predict alternate conformations (altlocs) even with multiple random seeds, indicating they have not learned about underlying ensembles. This gap motivates developing density-guided ensemble generation (Sampleworks).

Density guidance approach: The team is implementing training-free guidance from experimental density maps (2Fo-Fc), using the difference between experimental and calculated maps to steer diffusion model sampling toward conformations consistent with crystallographic data. Early results are promising but mixed: Boltz-2 with density guidance can capture both altlocs in some test cases like PTP1B (6B8X), though performance varies across systems.
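The guidance idea can be illustrated in one dimension. In this sketch the "structure model" is a hypothetical stand-in that always favors a single conformation. The loss is the squared difference between an experimental and a calculated map, which is an assumption because the project may use a different mismatch. The sampler is a deterministic toy loop, not a real diffusion schedule. The guidance term is only a gradient added at sampling time, so the underlying model is never retrained.

```python
import jax
import jax.numpy as jnp

GRID = jnp.linspace(-5.0, 5.0, 101)  # toy 1D grid standing in for a 3D map


def calc_density(coords, sigma=0.5):
    """Toy calculated map: one Gaussian blob per atom on GRID."""
    d = GRID[None, :] - coords[:, None]
    return jnp.exp(-0.5 * (d / sigma) ** 2).sum(axis=0)


def map_loss(coords, rho_exp):
    """Squared difference between the experimental and calculated maps."""
    return jnp.sum((rho_exp - calc_density(coords)) ** 2)


guidance_grad = jax.jit(jax.grad(map_loss))


def toy_denoiser(x):
    """Hypothetical stand-in for a pretrained model's prediction: it always
    favors a single conformation centered at x = 0."""
    return 0.5 * x


def sample(x0, rho_exp, n_steps=300, eta=0.1, scale=0.0):
    """Deterministic toy sampler: model update plus optional density guidance."""
    x = x0
    for _ in range(n_steps):
        x = x + eta * (toy_denoiser(x) - x)  # what the model alone would do
        if scale > 0:
            # training-free guidance: step down the gradient of the map mismatch
            x = x - scale * guidance_grad(x, rho_exp)
    return x


# "Experimental" map with two peaks, like a residue with two altlocs.
rho_exp = calc_density(jnp.array([-2.0, 2.0]))
x0 = jnp.array([-0.5, 0.7])
unguided = sample(x0, rho_exp)
guided = sample(x0, rho_exp, scale=0.01)
print("unguided:", unguided, "guided:", jnp.sort(guided))
```

With guidance off, both atoms collapse onto the model's preferred position. With guidance on, they settle near the two density peaks, pulled slightly toward the prior, which mimics a case where both altlocs are recovered.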

The Sampleworks pipeline is being built as a plug-and-play guidance framework that can combine different structure prediction models, experimental data types, and guidance strategies.

- Model wrappers implemented for RF3, Protenix, Boltz-1, and Boltz-2 (MD and X-ray modes)

- Initial test set of ~50 structures from PDB prepared with altlocs; electron density maps being generated

- Evaluation metrics: RSCC, LDDT, clash scores, backbone and sidechain geometry
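Two of the listed metrics are easy to state precisely. RSCC is the Pearson correlation between observed and calculated density over the grid points around the atoms being scored. A simple clash count flags atom pairs that sit implausibly close together. The sketch below pairs both with a name-based registry to show the plug-and-play idea. The registry, the 2.2 Å threshold and the sample dictionary layout are hypothetical and are not Sampleworks' actual API.

```python
import numpy as np


def rscc(rho_obs, rho_calc, mask):
    """Real-space correlation coefficient over the grid points in mask."""
    o = rho_obs[mask] - rho_obs[mask].mean()
    c = rho_calc[mask] - rho_calc[mask].mean()
    return float(np.sum(o * c) / np.sqrt(np.sum(o**2) * np.sum(c**2)))


def clash_count(coords, min_dist=2.2):
    """Count atom pairs closer than min_dist (Å). Illustrative only: real
    clash scores use per-atom radii and skip bonded neighbors."""
    d = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    i, j = np.triu_indices(len(coords), k=1)
    return int(np.sum(d[i, j] < min_dist))


# Hypothetical plug-and-play registry: metrics are looked up by name, so
# adding or swapping one does not change the evaluation loop.
METRICS = {
    "rscc": lambda s: rscc(s["rho_obs"], s["rho_calc"], s["mask"]),
    "clashes": lambda s: clash_count(s["coords"]),
}


def evaluate(samples, metric_names):
    return [{name: METRICS[name](s) for name in metric_names} for s in samples]


rho_obs = np.array([0.0, 1.0, 3.0, 1.0, 0.0])
sample = {
    "rho_obs": rho_obs,
    "rho_calc": 2.0 * rho_obs + 0.5,  # same shape, different scale and offset
    "mask": np.ones(5, dtype=bool),
    "coords": np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [5.0, 0.0, 0.0]]),
}
print(evaluate([sample], ["rscc", "clashes"]))
```

As a correlation, RSCC does not depend on map scale or offset, so the toy calculated map scores a perfect 1 even though its values differ from the observed ones.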

Water modeling has emerged as a critical challenge for advancing to reciprocal space. Our first step is to improve explicit-solvent modeling. Current models achieve ~0.3 precision and recall at 0.5 Å, which is insufficient for improving Rwork/Rfree. The team is exploring flow-matching approaches and evaluating whether a single unified model or separate protein and water models will be more effective. Ordered waters coupled to protein altlocs are particularly important targets.
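Precision and recall for water placement depend on how predictions are matched to reference waters. Below is a minimal sketch. It assumes that "at 0.5 Å" means a distance cutoff and allows many-to-one matches, whereas a stricter variant would pair waters one-to-one. The function name and toy coordinates are hypothetical.

```python
import numpy as np


def water_precision_recall(pred, ref, cutoff=0.5):
    """Precision and recall of predicted water positions against reference waters.

    pred: (n_pred, 3) and ref: (n_ref, 3) oxygen coordinates in Å.
    A prediction is a hit if some reference water lies within `cutoff`;
    a reference water is recovered if some prediction lies within `cutoff`.
    """
    if len(pred) == 0 or len(ref) == 0:
        return 0.0, 0.0
    d = np.linalg.norm(pred[:, None, :] - ref[None, :, :], axis=-1)
    close = d <= cutoff
    precision = close.any(axis=1).mean()  # fraction of predictions that hit
    recall = close.any(axis=0).mean()     # fraction of reference waters found
    return float(precision), float(recall)


# Toy example: 10 reference waters on a line, 3 recovered within 0.2 Å,
# plus 7 spurious predictions far from any reference water.
ref = np.array([[3.0 * k, 0.0, 0.0] for k in range(10)])
hits = ref[:3] + np.array([0.2, 0.0, 0.0])
spurious = np.array([[3.0 * k, 50.0, 0.0] for k in range(7)])
pred = np.vstack([hits, spurious])
print(water_precision_recall(pred, ref))  # (0.3, 0.3)
```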

Infrastructure and Publishing

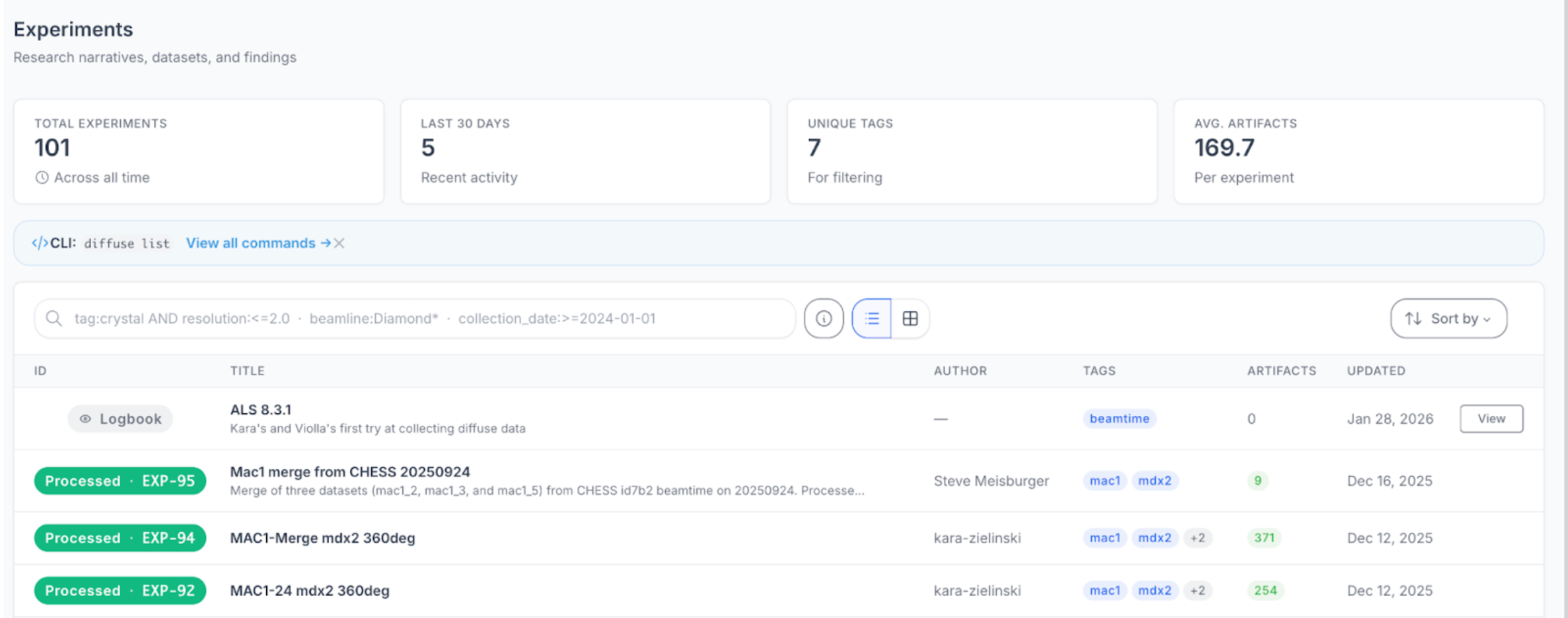

Justin Biel presented the computational infrastructure supporting DiffUSE, built around a three-pillar model: Data, Compute, and Publishing.

Compute Infrastructure uses Voltage Park as the backbone:

- H100 SXM5 GPUs available via bare metal (8× GPU configurations)

- Two usage patterns supported:

  - Workspaces: Interactive environments for experimental work, debugging, and visualization

  - Workflows: Hardened, scalable pipelines for production analysis

- The DiffUSE web app now provides resource checkout, visibility into running resources, and SSH/Jupyter access

- Custom container management enables workspace pausing and environment customization

- Workflow orchestration via Prefect and Docker

Data Infrastructure centers on the DiffUSE web app:

- Storage: Core Backblaze storage (S3-compatible) with OSN bucket integration for beamline data

- Access: Automatic mounting to Voltage Park resources, plus web app download, CLI, Python SDK, and API

- Metadata: Experiments have artifacts, optional markdown content (like logbook entries), relationships to other experiments, and tags

- Automation: Beam-trip data automatically triggers experiment registration; dataset files populate metadata fields

- Governance: Standards compliance checking, staging-to-public workflows, DOI attachment decisions
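Here is a minimal sketch of the experiment record described above. It assumes metadata can be parsed from file names. The naming convention, the field names, and the `register_beam_trip` and `compliance_problems` helpers are all hypothetical. The actual DiffUSE web app may read metadata from file headers and use a different schema.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set

# Hypothetical file-naming convention, e.g. "Mac1_100K_CHESS_run3.h5".
FILENAME = re.compile(
    r"(?P<system>[A-Za-z0-9]+)_(?P<temp>\d+)K_(?P<beamline>[A-Z]+)_run\d+\.h5$"
)
REQUIRED_FIELDS = ("system", "temperature_K", "beamline")


@dataclass
class Experiment:
    name: str
    artifacts: List[str] = field(default_factory=list)
    notes_md: Optional[str] = None                    # e.g. a logbook entry
    related: List[str] = field(default_factory=list)  # names of other experiments
    tags: Set[str] = field(default_factory=set)
    metadata: Dict[str, object] = field(default_factory=dict)


def register_beam_trip(trip_name: str, files: List[str]) -> Experiment:
    """Register one experiment per beam trip, filling metadata from file names."""
    exp = Experiment(name=trip_name)
    for path in files:
        exp.artifacts.append(path)
        m = FILENAME.search(path)
        if m:
            exp.metadata.setdefault("system", m["system"])
            exp.metadata.setdefault("temperature_K", int(m["temp"]))
            exp.metadata.setdefault("beamline", m["beamline"])
            exp.tags.add(m["system"])
    return exp


def compliance_problems(exp: Experiment) -> List[str]:
    """Standards check before staging -> public: list what is missing."""
    problems = [f for f in REQUIRED_FIELDS if f not in exp.metadata]
    if not exp.artifacts:
        problems.append("artifacts")
    return problems


exp = register_beam_trip(
    "example-trip",
    ["raw/Mac1_100K_CHESS_run1.h5", "raw/Mac1_100K_CHESS_run2.h5", "notes.txt"],
)
print(exp.metadata, compliance_problems(exp))
```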

Publishing workflow discussions focused on:

- When to stage data privately vs. make everything open immediately

- When to attach DOIs (content should be largely immutable)

- External database destinations: SBGrid Databank, PDB, Zenodo
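One way to encode "attach DOIs only once content is largely immutable" is to fingerprint the artifacts at public release and refuse DOI attachment if they have changed since. The states, the transition table and the `Record` class below are hypothetical. The DOI string is a placeholder, not a registered identifier.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, Optional

# Allowed transitions in this hypothetical publishing workflow.
TRANSITIONS = {"staged": {"public"}, "public": {"doi"}, "doi": set()}


def fingerprint(artifacts: Dict[str, bytes]) -> str:
    """SHA-256 over artifact names and contents, independent of dict order."""
    h = hashlib.sha256()
    for name in sorted(artifacts):
        h.update(name.encode() + b"\0")
        h.update(hashlib.sha256(artifacts[name]).digest())
    return h.hexdigest()


@dataclass
class Record:
    artifacts: Dict[str, bytes]
    state: str = "staged"
    released_hash: Optional[str] = None
    doi: Optional[str] = None

    def advance(self, new_state: str, doi: Optional[str] = None) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"cannot move from {self.state!r} to {new_state!r}")
        if new_state == "public":
            self.released_hash = fingerprint(self.artifacts)
        elif new_state == "doi":
            # Attach a DOI only if the content is unchanged since release.
            if fingerprint(self.artifacts) != self.released_hash:
                raise ValueError("artifacts changed after release; not immutable")
            self.doi = doi
        self.state = new_state


record = Record({"map.h5": b"v1"})
record.advance("public")
record.advance("doi", doi="10.0000/placeholder")  # placeholder, not a real DOI
print(record.state, record.doi)
```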

Open Science

Prachee Avasthi (Head of Open Science, Astera) led a discussion on publishing expectations and open science practices. Discussion explored barriers to sharing, evidence of downstream reuse, orphan artifacts without ideal homes, and prioritization of unaddressed data sharing issues.

Reflections on Our Distributed Model

This retreat underscored how well DiffUSE’s distributed structure works. By embedding team members across institutions (Cornell, UCSF, Berkeley Lab, and beyond), we maintain direct access to beamlines, computational expertise, and scientific communities that would be impossible to replicate in a single location. The “Diffuse East ≈ Diffuse West” result is itself a product of this model: data collected by different teams at facilities 2,500 miles apart, processed with shared tools, yielding consistent results. Our infrastructure investments (the DiffUSE web app, Voltage Park compute, standardized containerized environments) bridge the geographic gaps, allowing a scientist at Cornell to spin up the same analysis environment as a colleague in California.

The in-person retreat revealed how much asynchronous collaboration had already accomplished: sessions focused on integration and next steps rather than on catching people up. Open science practices (shared logbooks, blog posts, open repositories) keep everyone aligned between meetings. The challenge ahead is scaling this approach: as we add systems, datasets, and collaborators, maintaining the coherence that makes distributed work effective will require continued investment in documentation, automation, and the human connections that make a dispersed team feel like one group working toward a shared goal.

The science described here represents the output of a significant and coordinated resource investment. Since DiffUSE’s start in July, Astera has committed $3.2M to stand up the project: $2.63M in research grants distributed directly to our partner labs (Fraser Lab/LBL, Ando Lab/CHESS, and Wankowicz Lab), $567K in Astera personnel and contractor support, and $30K in computational infrastructure. On top of this, CHESS contributed an estimated $700K in beamtime, bringing the total resource investment to roughly $3.9M. Looking ahead, an additional $2.4M is projected for 2026 as the project scales toward its core scientific goals.

A screenshot from our data management infrastructure, demonstrated at the retreat. This is in active development with Prophet Town and Voltage Park.

Mike Wall presents progress on MD optimization of diffuse scattering to a full house at the Astera Institute.

What’s Next?

Data Collection (3-month goals)

- Collect data on additional systems; collect lysozyme at ALS

- Optimize sample handling for intermediate temperatures (oil-based approaches)

- Explore serial crystallography approaches (chip types, small wedges, crystal size variation)

- Continue investigating cryo options (traditional, NANUQ, high-pressure cryocooling)

Data Processing (2026 goals)

- Improve mdx2 performance (~2× speedup)

- Implement GOODVIBES and DISCOBALL in Python (JAX)

- Fully explore serial crystallography processing

- Deploy on Ando lab Galaxy server; add mdx2 tools

- Develop “Cryosparc for diffuse” project roadmap

MD Simulations

- Continue model/data comparisons and refine MD models (protonation states, parameterization)

- Expand to new systems and additional ligand/mutation perturbations

- Explore how MD optimizations can support other DiffUSE activities (ML modeling, diffraction image simulation, data processing validation)

ML Modeling

- Scale up Sampleworks evaluation across initial test set

- Improve water prediction models (retrain SuperWater with better data, explore flow matching vs. diffusion)

- Quantify water model precision requirements by systematically perturbing well-supported waters

- Progress toward reciprocal space/Bragg peak guidance, ultimately targeting diffuse data guidance

Infrastructure

- Finalize containerized workspace management with pause/resume capability

- Expand workflow orchestration options

- Refine data governance workflows for staging → public → external database publication

Open Science

- Address identified barriers to sharing

- Establish timelines for DOI attachment and external database deposition

- Continue documentation through blog posts and logbooks

Special thanks to Astera for hosting the retreat in Emeryville.

Glossary

| Acronym | Definition |
|---|---|
| ADPr | Adenosine diphosphate ribose (a ligand) |
| ALS | Advanced Light Source (synchrotron at Lawrence Berkeley National Laboratory) |
| API | Application Programming Interface |
| ASH | Protonated aspartic acid residue |
| ASP | Aspartic acid residue |
| ATCase | Aspartate Transcarbamylase (enzyme) |
| CC | Correlation Coefficient |
| CHESS | Cornell High Energy Synchrotron Source |
| CLI | Command Line Interface |
| DHFR | Dihydrofolate Reductase (enzyme) |
| DOI | Digital Object Identifier |
| GPU | Graphics Processing Unit |
| GUI | Graphical User Interface |
| JAX | Just After eXecution (Google's autodiff/ML library for Python) |
| keV | Kiloelectronvolt (unit of X-ray energy) |
| LDDT | Local Distance Difference Test (structure quality metric) |
| Mac1 | Macrodomain 1 (a domain of SARS-CoV-2 nonstructural protein 3) |
| MD | Molecular Dynamics |
| ML | Machine Learning |
| NrdE | Ribonucleotide Reductase class Ib alpha subunit (enzyme) |
| ns | Nanoseconds |
| OPC3 | Optimal Point Charge 3-point water model |
| OSN | Open Storage Network |
| PDB | Protein Data Bank |
| PTP1B | Protein Tyrosine Phosphatase 1B (enzyme) |
| RF3 | RoseTTAFold 3 (structure prediction model) |
| Rfree | Free R-factor (crystallographic validation metric) |
| Rwork | Working R-factor (crystallographic refinement metric) |
| RSCC | Real Space Correlation Coefficient |
| S3 | Simple Storage Service (cloud storage protocol) |
| SBGrid | Structural Biology Software Grid (consortium) |
| SDK | Software Development Kit |
| SSH | Secure Shell (network protocol) |
| UCSF | University of California, San Francisco |